Primer: Energy Systems, Incentives, and Models (Article)

Why you should care:

If you want to understand energy outcomes in the real world, you cannot stop at “which technology is best.” Energy systems are governed by hard constraints (physics, networks, timelines) and soft constraints that act just as hard (rules, institutions, contracts, incentives, human behavior). Models are how you test what must be true for a plan to work.

An energy system is not just “power plants” or “the grid.” It is the whole chain that turns physical resources into services people actually use, under rules that decide who can build, who gets paid, and who carries risk.

If you zoom out far enough, the system starts with primary energy resources (coal, natural gas, uranium, wind, sunlight, water flows), runs through conversion machines (turbines, boilers, photovoltaic cells, refineries, engines), and ends at the point where someone gets light, heat, motion, computation, or industrial output.

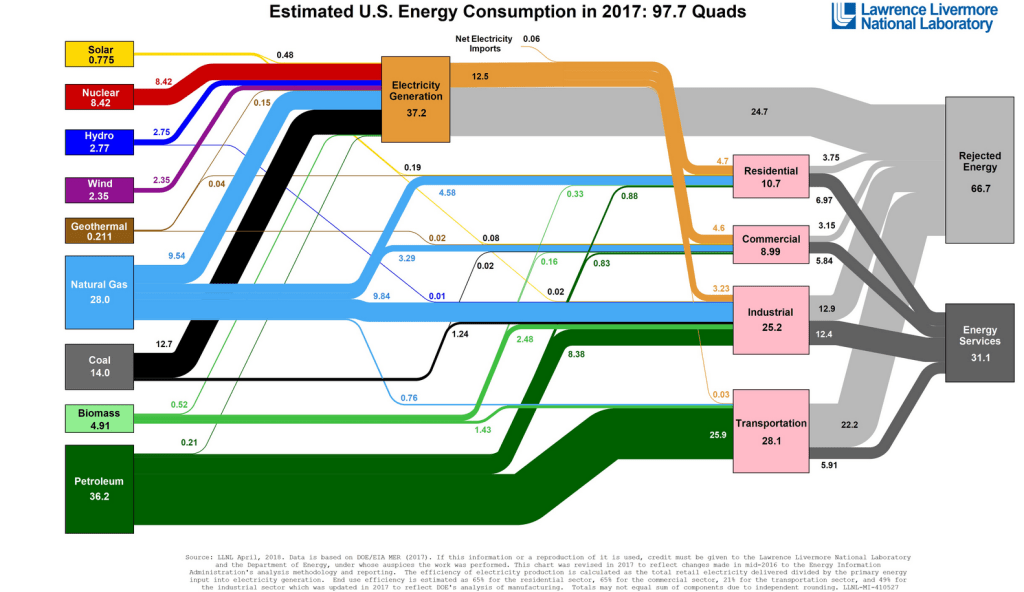

A useful way to see this is a national energy flow chart: one side shows inputs by source, the other side shows where that energy ends up by sector, with large blocks of “rejected energy” representing losses from conversion and use.

Electricity is only one slice of the broader energy system, but it is the slice where modeling often gets the most attention because it has tight real-time constraints. In 2023, the United States generated about 4,178 billion kilowatthours of electricity at utility-scale facilities, roughly 4.18 trillion kWh. About 60% of that came from fossil fuels, 19% from nuclear, and 21% from renewables. [2]

Those numbers describe energy produced at generators, not energy that arrives as useful work at your device. Even in a well-run grid, some energy is lost moving power through wires and transformers. The EIA estimates that transmission and distribution losses averaged about 5% of electricity transmitted and distributed in 2018–2022. [3] That number is not a moral failure; it is plain physics combined with our historical infrastructure choices. The loss still matters for planning: a model that treats delivered electricity as identical to generated electricity silently assumes away a real, measurable wedge. On the scale of U.S. generation, 5% of 4.18 trillion kWh is on the order of 200 billion kWh per year, which is large enough that you can be wrong about system costs and emissions simply by forgetting where the meter is.
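To make the "where is the meter" point concrete, here is a minimal Python sketch of the arithmetic. It applies the roughly 5% loss share directly to the 2023 generation figure, which is the same simplification used in the paragraph above (the EIA defines the share relative to electricity transmitted and distributed, so treat the result as an order-of-magnitude check). The function names are illustrative, not from any library.

```python
# Figures quoted in the text (EIA): 2023 utility-scale generation and the
# approximate average T&D loss share for 2018-2022.
GENERATION_BILLION_KWH = 4178.0
TD_LOSS_FRACTION = 0.05  # assumption: applied to generation as a simplification


def delivered_energy(generated: float, loss_fraction: float) -> float:
    """Energy left at the customer meter after T&D losses (same units as input)."""
    if not 0.0 <= loss_fraction < 1.0:
        raise ValueError("loss_fraction must be in [0, 1)")
    return generated * (1.0 - loss_fraction)


def required_generation(delivered: float, loss_fraction: float) -> float:
    """Generation needed at the plant to deliver a given amount of energy."""
    if not 0.0 <= loss_fraction < 1.0:
        raise ValueError("loss_fraction must be in [0, 1)")
    return delivered / (1.0 - loss_fraction)


losses = GENERATION_BILLION_KWH * TD_LOSS_FRACTION
delivered = delivered_energy(GENERATION_BILLION_KWH, TD_LOSS_FRACTION)

print(f"Losses:    {losses:,.0f} billion kWh")
print(f"Delivered: {delivered:,.0f} billion kWh")
```

Note that `required_generation` divides by `1 - loss_fraction` rather than multiplying by `1 + loss_fraction`. The two are close at 5%, but mixing them up is a common source of small, persistent errors when a model converts between demand at the meter and output at the generator.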

A clean mental anchor is the difference between power and energy, because it shows up everywhere in electricity systems and in the models built around them. Power is a rate (kW or MW). Energy is an amount accumulated over time (kWh or MWh). Think of a car: the thirty miles it travels is an amount, like energy, while the thirty miles an hour on the speedometer is a rate, like power.

A battery has both a power rating (how fast it can charge or discharge) and an energy capacity (how much it can store). If you confuse the two, you will build models that look plausible but fail under basic dimensional checks. A concrete example: Tesla’s Megapack 2 XL is marketed as a grid-scale battery product with an energy capacity around 3.9 MWh and a power output around 1.9 MW (product specs vary by configuration, but this “roughly two hours at full power” relationship is the point). [4]

If you ask whether a battery can “replace” a generator, a model has to answer two separate questions: can it meet the instantaneous peak (power), and for how long (energy). The incentives question sits on top of that: even if it can, who pays it to be available, and under what market rules?
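The two questions can be written down as two separate checks. The sketch below is a simplified illustration that uses the approximate Megapack 2 XL figures quoted above and ignores round-trip efficiency, depth-of-discharge limits, and degradation. The `Battery` class and its method names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Battery:
    power_mw: float    # how fast it can charge or discharge
    energy_mwh: float  # how much it can store

    @property
    def duration_hours(self) -> float:
        """Hours of discharge at full rated power (energy / power)."""
        return self.energy_mwh / self.power_mw

    def can_cover(self, peak_mw: float, hours: float) -> tuple[bool, bool]:
        """Return (power_ok, energy_ok) for holding peak_mw for the given hours."""
        power_ok = self.power_mw >= peak_mw
        energy_ok = self.energy_mwh >= peak_mw * hours
        return power_ok, energy_ok


# Approximate figures from the text; actual specs vary by configuration.
megapack = Battery(power_mw=1.9, energy_mwh=3.9)

print(f"Duration at full power: {megapack.duration_hours:.2f} h")
print("1.9 MW for 2 h:", megapack.can_cover(peak_mw=1.9, hours=2.0))
print("1.9 MW for 4 h:", megapack.can_cover(peak_mw=1.9, hours=4.0))
print("2.5 MW for 1 h:", megapack.can_cover(peak_mw=2.5, hours=1.0))
```

Returning the two results separately is deliberate: a battery can pass the power check and fail the energy check, or the reverse, and the remedy differs in each case.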

That layering—physics first, then institutions—is the reason energy systems are model-heavy. An energy model is a simplified representation of an energy system designed to make assumptions explicit and computable. “Model” can mean a spreadsheet with a few equations, a dispatch simulation that solves hourly supply and demand, a power-flow calculation that checks whether lines overload, a capacity-expansion optimization that chooses what to build over decades, or a market simulation that recreates how bids clear and revenues land. The common feature is not sophistication; it is discipline. A model forces you to state what you think matters, quantify it, and live with the consequences.

Models are used because energy systems are constrained in ways that intuition routinely mishandles. Electricity must balance supply and demand continuously. Equipment has ramp rates and minimum run levels. Transmission lines have thermal limits. Weather-driven resources vary. Demand varies by hour and season. Fuel prices move. Regulations change. Interconnection queues bottleneck. None of this means the future is “predictable,” but it does mean some failures are predictable. Models are best treated as tools for testing assumptions, not as machines that predict the future. If you run a model and get a single “answer,” you are usually seeing a single path through a maze of assumptions you did not notice you made.

A practical model behaves more like a lab bench than an oracle. You pick an assumption, change it, and watch what breaks. If solar costs fall 20%, what changes? If a transmission upgrade takes five years instead of two, what fails to get built on schedule? If natural gas prices double, what happens to dispatch, and which plants become marginal? If a market caps scarcity prices, does investment move elsewhere? These are not abstract questions. They show up in real systems because the binding constraints change, and models help identify which constraints are binding under which conditions.
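A toy version of the "change an assumption and watch what breaks" workflow is a merit-order dispatch run twice with different fuel prices. Everything in the sketch below is hypothetical: the four plants, their capacities, heat rates, variable costs, and the fuel prices are invented for illustration, and the model ignores ramp rates, minimum run levels, and transmission.

```python
# Hypothetical fleet. heat_rate is in MMBtu/MWh, vom (variable O&M) in $/MWh.
PLANTS = [
    {"name": "wind", "capacity_mw": 300, "fuel": None, "heat_rate": 0.0, "vom": 0.0},
    {"name": "coal", "capacity_mw": 400, "fuel": "coal", "heat_rate": 10.0, "vom": 5.0},
    {"name": "gas_cc", "capacity_mw": 500, "fuel": "gas", "heat_rate": 7.0, "vom": 3.0},
    {"name": "gas_peaker", "capacity_mw": 200, "fuel": "gas", "heat_rate": 10.0, "vom": 5.0},
]


def marginal_cost(plant, fuel_prices):
    """Short-run marginal cost in $/MWh: fuel price times heat rate, plus VOM."""
    fuel = plant["fuel"]
    fuel_cost = fuel_prices[fuel] * plant["heat_rate"] if fuel else 0.0
    return fuel_cost + plant["vom"]


def dispatch(plants, fuel_prices, load_mw):
    """Fill load from cheapest to most expensive; return (output_mw, marginal_plant)."""
    ordered = sorted(plants, key=lambda p: marginal_cost(p, fuel_prices))
    remaining = load_mw
    output, marginal = {}, None
    for plant in ordered:
        if remaining <= 0:
            break
        mw = min(plant["capacity_mw"], remaining)
        output[plant["name"]] = mw
        remaining -= mw
        marginal = plant["name"]  # last plant dispatched sets the margin
    if remaining > 0:
        raise ValueError("load exceeds total available capacity")
    return output, marginal


base_prices = {"coal": 2.5, "gas": 3.0}     # $/MMBtu, hypothetical
doubled_gas = {"coal": 2.5, "gas": 6.0}     # same system, gas price doubled

base_output, base_marginal = dispatch(PLANTS, base_prices, load_mw=900)
high_output, high_marginal = dispatch(PLANTS, doubled_gas, load_mw=900)

print("Base case:  ", base_output, "marginal:", base_marginal)
print("Gas doubled:", high_output, "marginal:", high_marginal)
```

With these invented numbers, doubling the gas price reorders the stack so that coal runs ahead of combined-cycle gas and a different plant sets the margin. The specific result is an artifact of the inputs; the useful part is that the model shows which constraint binds under which assumption.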

Technical energy models focus on the physical and operational side. They answer questions like: can the system meet load in every hour given generator availability and weather? Do power flows violate line limits under N-1 contingencies? What amount of storage reduces curtailment under a given solar buildout? How much firm capacity is needed to hit a reliability target? Technical models have the advantage of being anchored to conservation laws and engineering limits. They also have a predictable failure mode: they can produce technically feasible solutions that are institutionally impossible. A plan that requires transmission permits to clear in 18 months is “feasible” in math and impossible in the real world.
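The first of those questions, whether the system can meet load in every hour, can be sketched as a simple hourly balance. The example below is a toy under stated assumptions: one-hour steps, lossless storage that starts empty, dispatchable firm capacity, and a six-hour load and solar profile invented for illustration. The function `simulate_adequacy` is hypothetical, not part of any library.

```python
def simulate_adequacy(load_mw, solar_mw, firm_mw, storage_mw, storage_mwh):
    """Hourly balance with solar, firm capacity, and lossless storage.

    Returns (shortfalls, curtailed_mwh), where shortfalls is a list of
    (hour, unserved_mw) for hours in which load could not be met.
    """
    soc = 0.0          # state of charge in MWh; assumed empty at the start
    curtailed = 0.0    # surplus solar that could not be stored
    shortfalls = []
    for hour, (demand, sun) in enumerate(zip(load_mw, solar_mw)):
        residual = demand - sun  # load left after solar; negative means surplus
        if residual < 0:
            surplus = -residual
            # Charging is limited by power rating and remaining headroom.
            charge = min(surplus, storage_mw, storage_mwh - soc)
            soc += charge
            curtailed += surplus - charge
        else:
            deficit = max(residual - firm_mw, 0)
            # Discharging is limited by power rating and stored energy.
            discharge = min(deficit, storage_mw, soc)
            soc -= discharge
            unserved = deficit - discharge
            if unserved > 1e-9:
                shortfalls.append((hour, unserved))
    return shortfalls, curtailed


# Hypothetical six-hour profile, in MW.
load = [50, 60, 80, 100, 90, 70]
solar = [0, 40, 120, 90, 10, 0]

small = simulate_adequacy(load, solar, firm_mw=60, storage_mw=20, storage_mwh=40)
large = simulate_adequacy(load, solar, firm_mw=60, storage_mw=40, storage_mwh=40)

print("20 MW / 40 MWh storage:", small)
print("40 MW / 40 MWh storage:", large)
```

In this toy case the two batteries have the same energy capacity, but the one with the lower power rating cannot absorb the midday surplus fast enough, so it both curtails solar and runs short in the evening. Real adequacy studies add outages, weather years, and network limits on top of this basic loop.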

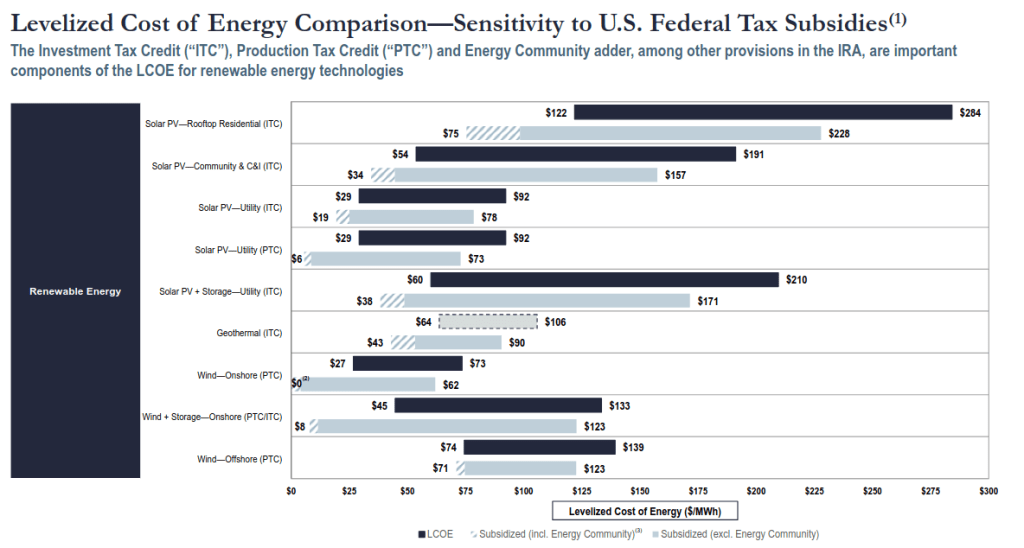

Economic energy models focus on costs, prices, and tradeoffs, often compressing a complex system into a few comparable metrics. The most widely used example is levelized cost of energy (LCOE), which converts lifetime costs and lifetime energy output into an all-in cost per MWh. LCOE is useful because it puts unlike things on a common scale, but it is also easy to abuse because it hides system context. Lazard’s LCOE+ report, for example, presents LCOE ranges for new-build generation technologies; in its June 2024 edition, it shows utility-scale solar PV and onshore wind as among the lowest-cost new-build options on an unsubsidized basis, while also showing wide ranges that depend on financing, resource quality, and project specifics. [5]

[PICTURE: LCOE bar chart showing overlapping ranges for utility solar, onshore wind, combined-cycle gas, peakers, etc., emphasizing that the output is a range, not a point.]
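The LCOE idea described above, lifetime costs divided by lifetime energy, can be sketched in a few lines. This is a simplified textbook form, not Lazard's methodology: it assumes all capital is spent in year 0, constant annual operating cost and output, and no taxes, subsidies, degradation, or fuel. Every input in the example is hypothetical.

```python
def lcoe(capex, annual_opex, annual_mwh, years, discount_rate):
    """Simplified levelized cost of energy in $/MWh.

    Discounted lifetime costs divided by discounted lifetime energy.
    Capex is incurred in year 0; opex and output occur in years 1..years.
    """
    discounted_costs = capex
    discounted_energy = 0.0
    for t in range(1, years + 1):
        factor = (1.0 + discount_rate) ** t
        discounted_costs += annual_opex / factor
        discounted_energy += annual_mwh / factor
    return discounted_costs / discounted_energy


# Hypothetical 100 MW solar project; none of these figures come from a report.
CAPACITY_MW = 100
CAPACITY_FACTOR = 0.25
annual_mwh = CAPACITY_MW * 8760 * CAPACITY_FACTOR

project = dict(capex=100e6, annual_opex=1.5e6, annual_mwh=annual_mwh, years=30)

# Same project, two financing assumptions: the output is a range, not a point.
lcoe_low_rate = lcoe(discount_rate=0.05, **project)
lcoe_high_rate = lcoe(discount_rate=0.09, **project)

print(f"LCOE at 5% discount rate: ${lcoe_low_rate:.2f}/MWh")
print(f"LCOE at 9% discount rate: ${lcoe_high_rate:.2f}/MWh")
```

Changing only the discount rate moves the result noticeably for a capital-heavy project, which is one reason published LCOE figures are reported as ranges. The calculation also says nothing about when the energy is produced or what the rest of the system needs, which is the system context that LCOE hides.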